The message from Nvidia chief Jensen Huang at GTC this week is that AI is no longer about models or chips alone, but about monetizing inference at scale – where tokens become the core unit of value, and data centers evolve into revenue-generating factories.

In sum – what to know:

Token AI – Nvidia used GTC to shift the industry narrative from AI infrastructure to AI economics, with tokens as the commodity to define value, pricing, and competition.

AI engine – Its Blackwell platform brings massive gains (to be exceeded by Rubin) and paves a way for optimised systems (not raw compute) to define profitability.

Tiered AI – Tiered token delivery will see ‘AI factories’ monetize and enterprises maximize per-watt AI performance, setting a stage for a new AI operating model.

Lots of news out of Nvidia’s annual GTC shindig in San Diego; some of it is interesting – the telco-geared AI-RAN stuff with T-Mobile and Nokia, covered yesterday (plus its IoT work with AT&T and Cisco, announced today); the idea of a new category of “agent computers” (a likelier hit than AI glasses, surely); all the timely focus on physical AI, animated by agents running inference models at the edge. But honestly, it is hard to get your head around (20-plus news releases), and, really, today’s industry trends are yesterday’s roadmap items for Nvidia, and the biggest talking point at GTC is in the framing – which, these days, sets the whole tech scene.

As such, Jensen Huang’s talk during his GTC keynote about the firm’s Grace Blackwell CPU/GPU architecture, allied to its NVLink rack-scale wiring and FP4 tensor cores (plus “new algorithms” and “optimised kernels”), was most interesting. But there was some build-up, and a grand-standing sales pitch. Internally, 2025 was a “year of inference” for the firm, said Huang, which “drove this inflection point” – where, on one hand, it took crazy orders for Hopper GPUs from model builders and cloud providers, and made money hand over fist, and, on the other, could see it couldn’t last (demand was infinite, capacity was not), and took steps to reinvent its seminal Hopper architecture.

Huang said: “We dedicated everything to it. We took a giant chance – while Hopper was at its prime, and just cooking – to take it to the next level. We completely rearchitected the system, disaggregated it altogether, and created NVLink72. The way it’s built, manufactured, programmed has completely changed. It was a giant bet, and it wasn’t easy for our partners.” Cue some thanks, and applause. The current Blackwell combo delivers 50-times (!) throughput improvements over the Hopper platform, apparently; it processes ‘tokens’ at a rate of 5,000 per second, versus about 700 in a Hopper setup – and its forebear underpinned the whole shift to generative AI.

“Because a trillion dollars is an enormous amount… and you have to have complete confidence [your AI infrastructure] will be utilized – and performant and cost effective, and have useful-life for as long as you need… [Ours] is the only infrastructure in the world you can build anywhere in the world with complete confidence – in any cloud, any enterprise, any country,” said Huang. Nvidia’s Grace Blackwell architecture is “fungible for all of that”, he said, referencing multi-modal AI in every domain (“in language and biology, computer graphics, computer vision; in speech, proteins and chemicals, robotics”). Which makes Nvidia the “highest confidence platform”, he said.

Diverse usage

Sales pitch, see? But what a one. And this is about where Huang got into more-illuminating commentary, arguably, about the direction of travel; where Nvidia also told the world how to think about AI, and the world pricked up its ears. Sixty percent of Nvidia’s business is with the top five hyperscalers, including to migrate legacy enterprise workloads (internet search and content filtering); the rest is “just everywhere”, said Huang, listing regional, sovereign, and industrial cloud scenarios for any number of scientific and commercial applications. “The diversity of AI is also its resilience,” he said. Every inch of capacity will be used up; every dollar of investment will be maxed-out.

“No matter how large, no matter how quick, it will all be consumed,” he said. Which is where the AI version of the old tech pitch (faster / bigger / better) stopped – kind of – and ideas about a new AI world started. “This is where I torture all of you, but it’s too important,” said Huang. “Everybody’s looking for land and power. But once you build, you’re power-limited… Your workload is inference, your tokens are your commodity, and that compute is your revenue. So you want to make darn sure that architecture is optimized. In the future, every telecoms provider, computer company, cloud company, AI company – every company, period – is going to be thinking about token effectiveness.”

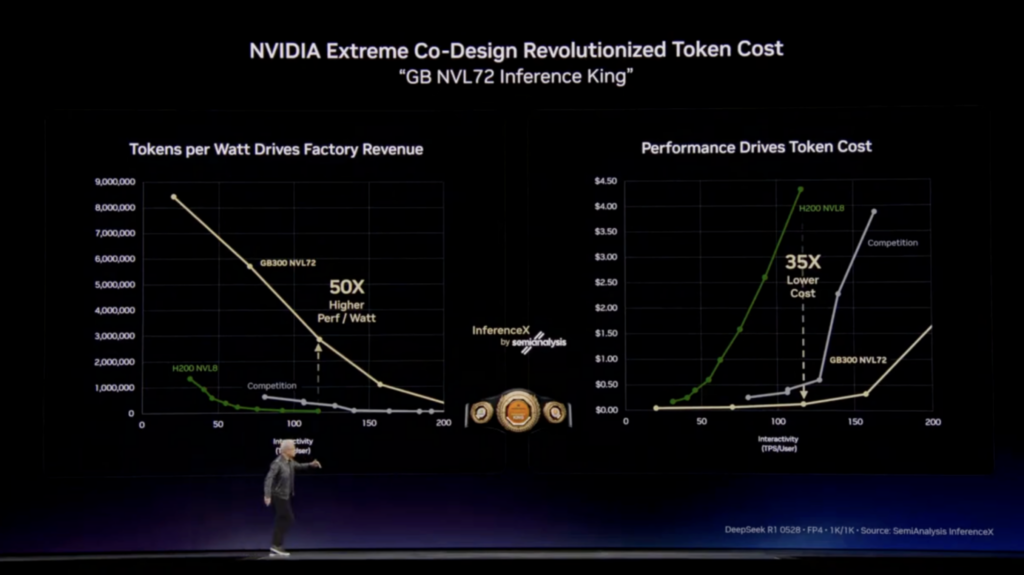

On stage, Huang stood in front of a chart (see above, left) showing the throughput (tokens per second at a fixed power level) on the vertical axis and the token speed (response rate per inference step) on the horizontal axis. “You watch, every CEO in the world will study their business from now in the way I’m about to describe – because this is your token factory; this is your AI factory; these are your revenues. There’s no question.” Data centers are no longer just compute hubs, but “AI factories” – per the new terminology – that produce tokens at scale, and measure efficiency in throughput, latency, and revenue per watt. Which is what every chief will scrutinize: token efficiency, as a core operating metric.

This is the crux, then: the AI token, this chunk of text or multi-modal input/output equivalent in a single inference operation, is a commodity. It should be and will be monetized, said Huang. The chart is overlaid with a performance rating for Grace Blackwell (plus NVLink72 wiring, plus FP4 tensor cores, plus new algorithms and kernels in “extreme co-design”), versus Grace Hopper and Nvidia’s “competition” – as reviewed by SemiAnalysis. “A one gigawatt factory will never become two – the laws of atoms, the laws of physics. So you want to drive the maximum number of tokens – the product of the factory. You want to be on top of that curve, as high as you can.”

Optimised wattage

He went on: “The faster the inference, the faster you respond; but the faster the inference, the larger the model – more context, more tokens. So the Y is the throughput and the X is the smartness. The smarter the AI, the lower the throughput. Makes sense; you’re thinking longer.” In other words, the calculation is to balance intelligence and output – when one trades against the other. A more capable model – one that reasons longer, pulls more context, generates richer responses – consumes more compute and produces fewer tokens. Speed and volume sacrifice depth; sophistication kills throughput. Hence, the case for the Grace Blackwell output score, 50-times more tokens per watt.

It is a stat from SemiAnalysis. Huang said: “Moore’s Law would’ve given us two-times, probably one-and-a-half. You could’ve expected that kind of a jump – versus Hopper H200. [But] nobody expected [50] times higher.” But how to monetize? Well, the same way as everything else. Huang has another chart, besides (above, right), that says its 50-times “inference king” performance scores also deliver 35-times better token cost (versus Hopper; a little less versus the “competition”) – on a sample measure of something-north of 200 tokens per second (TPS) on small / efficient models (between seven and 13 billion parameters). “Our cost per token is the lowest in the world,” he said.

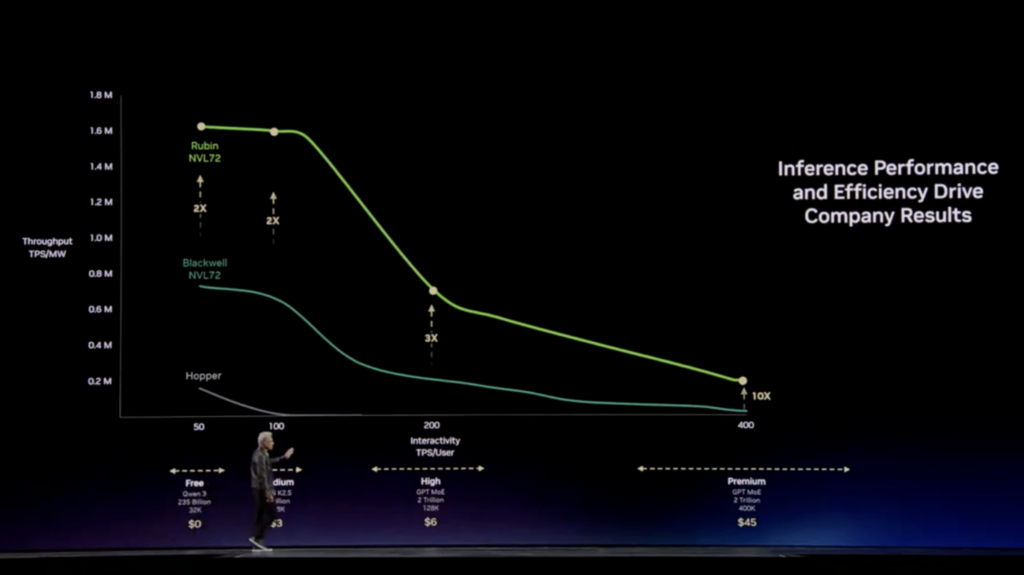

He restated the whole zoomed-out pitch. “I’ve said before, the wrong architecture, even if it’s free, is not cheap enough,” he said. “Because no matter what happens, you still have to build a gigawatt data center, and that factory, amortized for 15 years, is about $40 billion. Even when you put nothing in it, it’s $40 billion. So you better make for-darn sure you put the best computer system on that thing so you have the best token cost.” Huang showed another graph (see above), a little speculative, but also impactful – about how AI performance and efficiency will drive company results, and ultimately define how AI inference is charged and paid for. It grounds the whole 2026 discussion at GTC.
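Huang's point about the fixed cost of the building can be put into rough numbers. The Python sketch below spreads the $40 billion over 15 years and divides it by token output. Only those two figures come from the keynote; the site-wide throughput values are hypothetical placeholders, and the straight-line amortization ignores energy, hardware, financing, and utilization.

```python
# Sketch: facility cost carried by each million tokens in a power-limited site.
# The $40bn and 15-year figures are from the keynote; everything else is assumed.
FACILITY_COST_USD = 40e9
AMORTIZATION_YEARS = 15
SECONDS_PER_YEAR = 365 * 24 * 3600


def facility_cost_per_million_tokens(tokens_per_second: float) -> float:
    """Straight-line amortized building cost per one million tokens produced."""
    annual_cost = FACILITY_COST_USD / AMORTIZATION_YEARS
    annual_tokens = tokens_per_second * SECONDS_PER_YEAR
    return annual_cost / annual_tokens * 1e6


# Hypothetical site-wide throughputs (not from the keynote): a baseline system
# and one that produces 50 times more tokens from the same gigawatt.
baseline = facility_cost_per_million_tokens(1e8)
improved = facility_cost_per_million_tokens(50 * 1e8)

print(f"baseline: ${baseline:.4f} per million tokens")
print(f"improved: ${improved:.4f} per million tokens")
print(f"ratio:    {baseline / improved:.0f}x")
```

Because the building costs the same whatever sits inside it, the facility cost per token falls in direct proportion to throughput, which is the arithmetic behind the "even if it's free" line.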

“This chart is what it’s all about,” said Huang. It is hypothetical, but looks completely reasonable, and as such, it includes a (green) line to show the real enterprise value that Nvidia’s incoming Vera Rubin (NVL72) replacement (“architected for every phase of agentic AI, advancing every pillar of computing”) might deliver, even versus Grace Blackwell (NVL72). The Vera Rubin platform – in trial with hyperscalers now, in the shops later this year – is geared for multi-modal model training, continuous inference, and tight GPU/CPU rack integration. It is the company’s new foundation for large-scale clusters in mega-sized gigawatt AI ‘factories’.

Tiered service

A marketing video says it delivers 3.6 EFLOPS at FP4 with 260 TB/s wall-to-wall NVLink – a “40 million times” advance on Nvidia’s original DGX-1 platform a decade ago, which featured eight Pascal GPUs delivering 170 TFLOPS and first-generation NVLink. Huang picked up again: “The token length, depending on the application, continues to grow – from maybe hundred thousand tokens to maybe millions. The token output length is growing as well. And all of this plays into the marketing and pricing of future tokens, ultimately. Tokens are the new commodity. And like all commodities, once it reaches inflection and matures, it will segment into different parts.”

To the monetization of AI tokens, then: Nvidia proposes tiered pricing based on throughput speed – from gratis (high throughput, low speed), as AI is consumed today, through medium ($3 per million), high ($6), and premium plans ($45) – and “maybe one day” to a top-end package ($150) “because you’re in a critical path, or doing long research”. Huang said: “And $150 for a million tokens is just not a thing – 50 million tokens per day as a research team, at $150 per million. So we believe this is the future. This is where AI wants to go. This is where it is today (free), which is where it had to start to establish its value and usefulness. In the future, you will see services encompass all of that.”
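For a sense of scale, the research-team example works out as below. This is a minimal Python sketch using the per-million prices quoted on stage; the tier label `top` for the $150 package is a name chosen here for illustration, not Nvidia's.

```python
# Sketch: daily bill for a given token volume at each quoted tier price.
# Prices (USD per million tokens) are from the keynote; "top" is a label
# assumed here for the $150 package.
PRICE_PER_MILLION_USD = {
    "free": 0.0,
    "medium": 3.0,
    "high": 6.0,
    "premium": 45.0,
    "top": 150.0,
}


def daily_cost(tokens_per_day: float, tier: str) -> float:
    """Return the daily spend in USD for a token volume on one tier."""
    return tokens_per_day / 1e6 * PRICE_PER_MILLION_USD[tier]


# Huang's example: a research team using 50 million tokens per day.
for tier in PRICE_PER_MILLION_USD:
    print(f"{tier:>8}: ${daily_cost(50_000_000, tier):,.2f} per day")
```

At the $150 rate, the 50 million tokens per day in Huang's example come to $7,500 per day.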

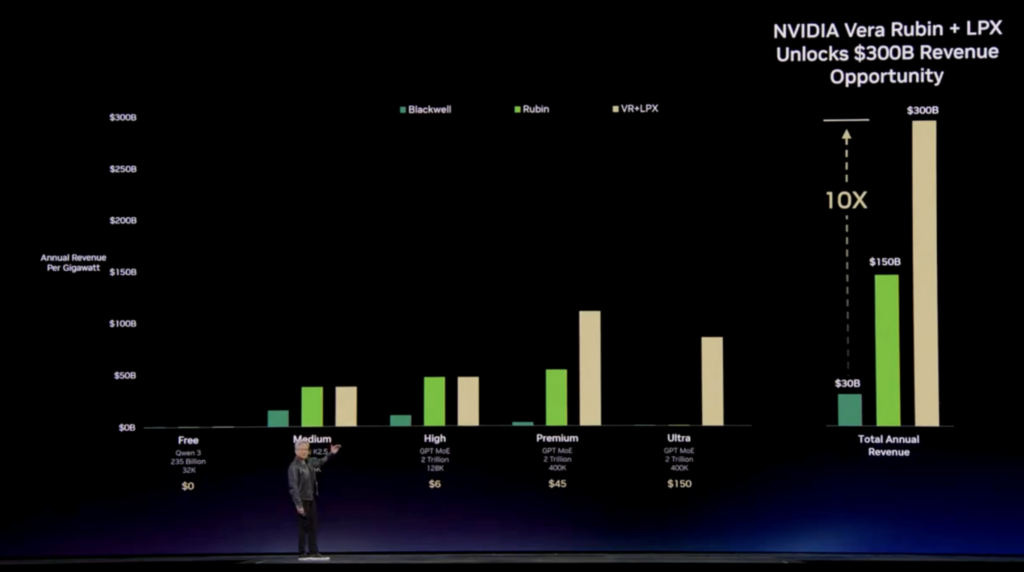

And then he went to a new graph (see above; follow the prompts), and returned to the sales pitch (as is his right): “This is Grace Blackwell, and this is Vera Rubin,” he said, and San Diego burst into a round of applause (eye roll). “Think what just happened. In every tier, we increased the throughput, and, in the (premium; 400 TPS; $45) tier – your highest ASP and most valuable segment – we increased it by 10-times (see right hand side, Blackwell vs Rubin NVL72). That (premium performance) is incredibly hard to do out here. This is the benefit of NVLink72, this is the benefit of extremely low latency, this is the benefit of extreme co-design – that we can shift the entire area up.

“What does it mean for customers? Suppose I take all of that, and multiply it again – suppose I take 25 percent of my power, and use it in the free tier; 25 percent in the medium tier; 25 percent in the high tier; and 25 percent in the premium tier. My data center has a gigawatt. So I get to decide how I want to distribute [the power]. The free tier lets me attract more customers; the premium tier allows me to serve my most valuable customers. And the combination, the product of all that, [brings] your revenues. The revenues you can generate with Vera Rubin – in this simplistic example – are five-times higher (versus Grace Blackwell; a $150 billion opportunity, says the slide).”
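The power-split logic Huang describes is a weighted sum: each tier's share of the gigawatt, times the tokens that power yields at that tier's speed, times the tier price. The Python sketch below shows that structure only. The prices and the equal 25 percent split come from the keynote; the tokens-per-second-per-megawatt figures are invented placeholders and are not read off Nvidia's slide.

```python
# Sketch: annual revenue from splitting a fixed power budget across price tiers.
# Prices and the 25% split are from the keynote; throughput values are
# hypothetical placeholders (faster tiers yield fewer tokens per megawatt).
PRICE_PER_MILLION_USD = {"free": 0.0, "medium": 3.0, "high": 6.0, "premium": 45.0}
SECONDS_PER_YEAR = 365 * 24 * 3600


def annual_revenue(total_mw, power_share, tokens_per_sec_per_mw):
    """Sum tier revenue for a site of total_mw megawatts."""
    if abs(sum(power_share.values()) - 1.0) > 1e-9:
        raise ValueError("power shares must sum to 1")
    revenue = 0.0
    for tier, share in power_share.items():
        tokens_per_year = (
            share * total_mw * tokens_per_sec_per_mw[tier] * SECONDS_PER_YEAR
        )
        revenue += tokens_per_year / 1e6 * PRICE_PER_MILLION_USD[tier]
    return revenue


equal_split = {"free": 0.25, "medium": 0.25, "high": 0.25, "premium": 0.25}
placeholder_throughput = {  # tokens/sec per MW, assumed for illustration
    "free": 1_000_000,
    "medium": 500_000,
    "high": 250_000,
    "premium": 50_000,
}

revenue = annual_revenue(1000, equal_split, placeholder_throughput)
print(f"annual revenue at 1 GW: ${revenue / 1e9:.1f}bn")
```

Swapping in a second throughput table for a newer platform and comparing the two totals is how a multiple like the five-times figure would be derived, given real per-tier numbers.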

He finished up: “So, Vera Rubin – you should get there as soon as you can.”