Nvidia’s trillion-dollar AI infrastructure forecast set the tone at GTC yesterday, framing its AI-RAN partnerships with Nokia and T-Mobile (part of a $2tn industry) as a new frontier for low-latency inference at the edge.

In sum – what to know:

Robotics platform – Nvidia positions RAN as a future AI compute platform, turning cell sites into “robotic” nodes to optimize AI traffic and host inference workloads.

T-Mobile and Nokia – T-Mobile US is testing Nvidia’s edge-AI servers (with GPUs) alongside Nokia’s RAN software, co-located at its RAN sites and nearby edge locations.

Private 5G dynamic – T-Mobile is targeting cities, utilities, industrial sites; the latter are a focus for the private 5G industry, which Nokia has quit and T-Mobile never served.

Nvidia hailed a new “era of trillion-dollar AI infrastructure” at GTC yesterday (March 16) – just for itself. Jensen Huang, founder and chief executive at the firm, told the firm’s annual AI ecosystem shindig in San Jose that it expects at least $1 trillion in demand for its Blackwell and Rubin GPUs through 2027 – which is a 100 percent advance on its 2026 projection, of $500 billion, made at GTC a year ago. In the end, the real 2027 figure will be even higher, suggested Huang. “I’m convinced the actual computing demand will be far greater than that.”

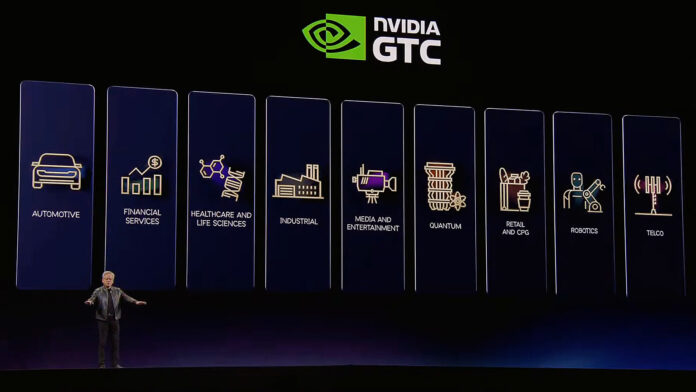

The story then – as Huang tells it, as everyone knows it – is that Nvidia is at the heart of the AI story. Its trillion-dollar forecast set the context for everything in Huang’s GTC address, which lasted over two hours, and was accompanied by at least 20 press announcements: about infrastructure building, model development, ecosystem building, enterprise adoption, plus a bunch more. Honestly, it is hard to get your head around – as you can see for yourself. For telecoms, there is AI-RAN work with T-Mobile, involving Nokia – whose $1 billion from Nvidia now defines its future strategy.

Let’s start with T-Mobile and Nokia, as a way into the rest of the Nvidia noise – which will be rounded up in a separate post (if we have time). Huang told GTC: “Telecoms is about as large as the world’s IT industry – about $2 trillion. You see base stations everywhere; [they are] one of the world’s infrastructures; the infrastructure of the last generation of computing. That infrastructure will be completely reinvented. And the reason is very simple: that base station, [which has done] one thing [until now], is going to be an AI infrastructure platform [when] AI runs at the edge.”

He added: “Our platform is called Aerial AI RAN, [and we have a] big partnership with Nokia, [and a] big partnership with T-Mobile, and many others”. But there is some confusion, still, about the nature of accelerated RAN-based workloads in future – whether for steering and optimising RAN resources to support AI traffic, or for AI traffic to pitstop at the RAN edge so agents can run inference on their payloads. Nokia said at MWC that AI-RAN is mostly about the former: RAN optimization for AI traffic.

Later in his keynote, Huang appeared to say the same. “We have T-Mobile here. And the reason for that is that in the future, that radio tower, which used to be a radio tower, is going to be an Nvidia Aerial AI RAN – and so this is going to be a robotics radio tower, meaning it can reason about the traffic, figure out how to adjust its beamforming to save as much energy as possible and increase the amount of fidelity as possible,” he remarked. There was nothing else about AI RAN in the GTC keynote.

But the press note about its AI-RAN project with T-Mobile and Nokia said more, suggesting AI inference workloads will run on Nvidia GPUs in the RAN, or adjacent to it in RAN sites or carrier-owned co-location facilities.

Specifically, Nvidia said it is working with T-Mobile (US) and Nokia to “bring physical AI applications over distributed edge AI networks”. For “distributed edge AI” read AI-RAN, as a “foundation for developers to deploy vision AI agents” – per the focus of Nvidia’s investment in the Finnish firm. And for AI-RAN running AI agents, read low-latency inference workloads, orchestrated in GPU clusters in or near the radio access network (RAN). They are targeting cities, utilities, and also “industrial worksites” – which is interesting, considering Nokia’s withdrawal from the ‘campus’ edge.

Indeed, the last part, about public RAN sites for AI at private worksites, crosses with the private-5G campus market’s essential MO. It also reinforces the pitch about dedicated public 5G slices for enterprises. It might be noted, as well, that T-Mobile is attempting to pull together a more-serious enterprise proposition around its public 5G SA infrastructure, to go up against the likes of AT&T and Verizon, notably – and that it lacks an equivalent private 5G play. Nokia, the one-time leader in private 5G, is backing the same horse.

T-Mobile was the first carrier in the US to test Nvidia’s AI-RAN infrastructure (ARC-Pro, using RTX 450/600 Blackwell Server Edition) with Nokia’s RAN software (anyRAN). It is now working with Nvidia-accredited physical AI developers (including Fogsphere, LinkerVision, Levatas, Vaidio, Siemens Energy) to demo how “cell sites and [also] mobile switching offices can support distributed edge AI workloads”, alongside public 5G connectivity. They will variously integrate with Nvidia’s Metropolis Blueprint for video search and summarization (VSS) on the setup.

The latest version of Nvidia’s VSS (3) Blueprint brings multimodal visual understanding and agentic search, and is offered as a modular architecture that can be reworked for diverse environments (“from retail stores to warehouses”). Nvidia said there are 1.5 billion cameras in the world, and less than one percent of the video content they capture is reviewed by humans. The blueprint can “decompose complex natural language queries and search across video footage to find specific events in under five seconds”, and “summarize long-form video up to 100x faster than manual reviews”, it said.

Caterpillar, KION, Hitachi, HCLTech, Siemens Energy, Tulip, and Telit Cinterion are using the VSS 3 product. Developers LinkerVision, Inchor, and Voxelmaps are testing integrated computer vision-based “city operations agents” and a digital twin to perceive, simulate, and optimize traffic light timing (classic smart-city use case, as old as the IoT hills) in the City of San Jose. They are targeting five-times faster incident response times, they said. Nvidia set the 5G SA edge-slicing setup against AI inference on Wi-Fi networks (always the whipping boy for mobile).

It stated: “While Wi-Fi is limited by reach and security, T-Mobile’s 5G SA network provides the wide-area coverage and guaranteed quality-of-service for complex AI agents to operate in busy city intersections, industrial facilities, and rural areas. This architecture enables physical AI to offload heavy computation from the device to the nearest edge location. Shifting heavy processing to the network edge allows developers to streamline hardware requirements for cameras and robots, to cost-effectively scale sophisticated AI models across billions of interconnected devices.”

Huang said: “Networks are evolving into the AI infrastructure enabling billions of devices – from vision AI agents to robots and autonomous vehicles – to see, hear and act in real time. By turning the 5G network into a distributed AI computer with T-Mobile and Nokia, we’re creating a scalable blueprint for the world’s edge AI infrastructure.”

Srini Gopalan, chief executive officer at T-Mobile, said: “Turning networks into distributed AI computing platforms to unlock the full potential of physical AI will require ultra-low latency and space time coherency at the network edge for billions of endpoints, and that’s what we’ve built at T-Mobile. With the first nationwide 5G SA and 5G Advanced (5G-A) network, we are uniquely positioned to help power a future where intelligent systems don’t wait on the cloud but rely on intelligent networks that allow them to act in real time.”