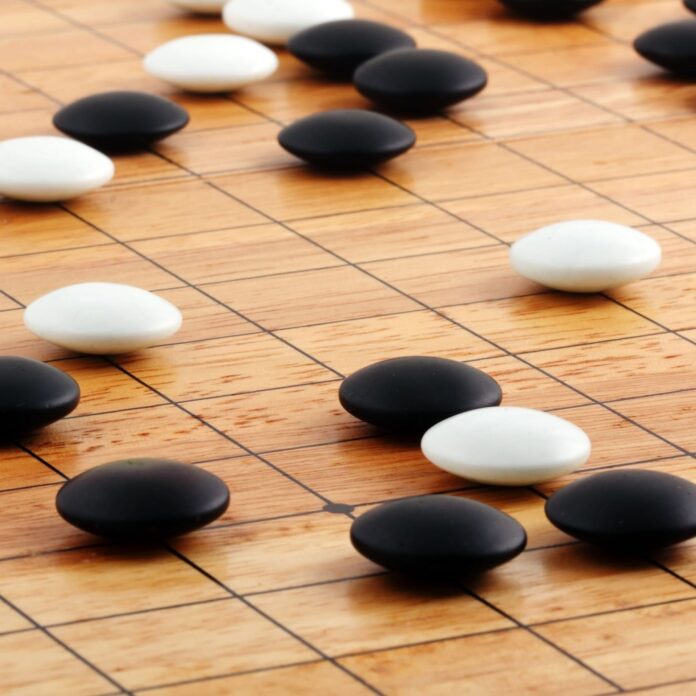

In October 2015, AlphaGo beat three-time European Champion Fan Hui. Go, which reportedly emerged in China around 548 B.C., is a two-player strategy game in which the players take turns placing black and white stones on the intersections of a gridded board.

According to Google, “Go is a game of profound complexity. There are more possible positions in Go than there are atoms in the universe. That makes Go a googol [one followed by 100 zeros] times more complex than chess.”
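The scale of the numbers in Google's statement can be checked with a little arithmetic. The sketch below assumes the standard 19×19 board and uses a crude upper bound: each of the 361 points is empty, black, or white, giving 3^361 configurations. Many of those configurations are illegal, so the true number of legal positions is smaller (roughly 2.1 × 10^170), and the 10^80 figure for atoms is itself only a commonly quoted order-of-magnitude estimate.

```python
import math

# Crude upper bound (assumption for illustration): each of the 361 points on a
# 19x19 board is empty, black, or white. Many of these configurations are not
# legal Go positions, so the true count of legal positions is smaller.
BOARD_POINTS = 19 * 19
upper_bound = 3 ** BOARD_POINTS  # Python integers are exact at any size

# Commonly quoted order-of-magnitude estimate of atoms in the observable universe.
ATOMS_ESTIMATE = 10 ** 80

digits = len(str(upper_bound))
exponent = math.log10(upper_bound)  # math.log10 accepts arbitrarily large ints

print(f"3^361 has {digits} digits (about 10^{exponent:.1f})")
print(f"ratio to the 10^80 atom estimate: about 10^{exponent - 80:.1f}")
```

Even this loose bound is a 173-digit number, more than 90 orders of magnitude above the atom estimate, which is why enumerating positions one by one is hopeless.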

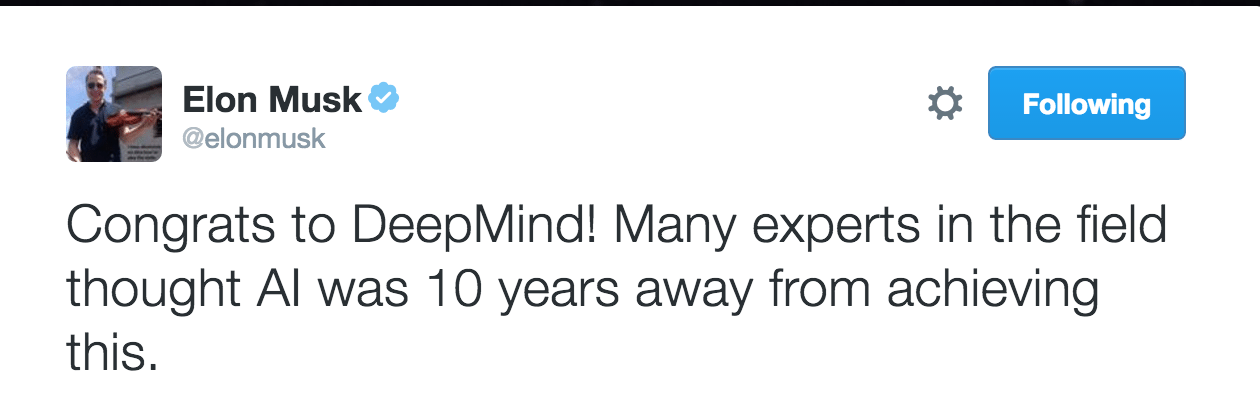

Futurist and entrepreneur Elon Musk reacted to AlphaGo's earlier victory on Twitter: “Congrats to DeepMind! Many experts in the field thought AI was 10 years away from achieving this.”

Google acquired the AI and neural network company DeepMind in 2014. Google DeepMind is the group that built AlphaGo; DeepMind founder Demis Hassabis, now at Google, described the system in a blog post:

“Complexity is what makes Go hard for computers to play, and therefore an irresistible challenge to artificial intelligence researchers, who use games as a testing ground to invent smart, flexible algorithms that can tackle problems, sometimes in ways similar to humans. Traditional AI methods – which construct a search tree over all possible positions – don’t have a chance in Go. So when we set out to crack Go, we took a different approach. We built a system, AlphaGo, that combines an advanced tree search with deep neural networks. These neural networks take a description of the Go board as an input and process it through 12 different network layers containing millions of neuron-like connections. One neural network, the ‘policy network,’ selects the next move to play. The other neural network, the ‘value network,’ predicts the winner of the game.”
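To make the quoted description more concrete, here is a minimal Python sketch of the general idea of guiding a tree search with a "policy network" and a "value network." It is not DeepMind's code and leaves out almost everything that makes AlphaGo work: the game is a hypothetical stand-in (a pile of stones from which players remove 1 to 3, and whoever takes the last stone wins), `policy_net` just returns uniform priors, `value_net` is hand-coded rather than learned, and the PUCT-style selection rule and the constant `c_puct = 1.5` are assumptions chosen for illustration. The policy priors steer which moves the search explores first; the value estimates let the search score a position without expanding it any further.

```python
import math
from dataclasses import dataclass, field

# Hypothetical toy game standing in for Go: players alternately remove
# 1, 2 or 3 stones from a pile; whoever takes the last stone wins.
MOVES = (1, 2, 3)

def legal_moves(pile):
    return [m for m in MOVES if m <= pile]

def policy_net(pile):
    """Stand-in for a trained policy network: a prior for each legal move.
    Here it is simply uniform."""
    moves = legal_moves(pile)
    return {m: 1.0 / len(moves) for m in moves}

def value_net(pile):
    """Stand-in for a trained value network: predicted outcome in [-1, 1]
    for the player to move. Hand-coded, and exact for this toy game:
    piles that are multiples of 4 are lost for the player to move."""
    return -1.0 if pile % 4 == 0 else 1.0

@dataclass
class Node:
    prior: float
    visits: int = 0
    value_sum: float = 0.0  # from the perspective of the player to move here
    children: dict = field(default_factory=dict)

    def q(self):
        return self.value_sum / self.visits if self.visits else 0.0

def select_child(node, c_puct=1.5):
    """PUCT-style rule: prefer moves with a high average value, plus an
    exploration bonus for moves the policy likes but the search has rarely tried."""
    total = math.sqrt(node.visits)

    def score(item):
        _, child = item
        # child.q() is from the opponent's perspective, so negate it.
        return -child.q() + c_puct * child.prior * total / (1 + child.visits)

    return max(node.children.items(), key=score)

def simulate(node, pile):
    """Run one search iteration; return the value for the player to move at `pile`."""
    if pile == 0:
        value = -1.0  # the opponent took the last stone, so the player to move lost
    elif not node.children:
        # Leaf: expand using the policy priors, score it with the value net.
        for move, p in policy_net(pile).items():
            node.children[move] = Node(prior=p)
        value = value_net(pile)
    else:
        move, child = select_child(node)
        value = -simulate(child, pile - move)  # flip perspective going back up
    node.visits += 1
    node.value_sum += value
    return value

def search(pile, simulations=200):
    """Return the most-visited move at the root and the root's visit counts."""
    root = Node(prior=1.0)
    for _ in range(simulations):
        simulate(root, pile)
    best = max(root.children.items(), key=lambda item: item[1].visits)[0]
    return best, {m: c.visits for m, c in root.children.items()}

if __name__ == "__main__":
    best, visits = search(5)
    print("best move:", best, "visit counts:", visits)
```

With this toy setup, `search(5)` should concentrate its visits on taking one stone, which leaves the opponent facing a pile of 4, a losing position for them. Choosing the most-visited move rather than the one with the highest average value is a common convention in this family of searches.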