As AI workloads splinter across the fragmented cloud-infrastructure landscape, enterprises are scrambling to keep performance, governance, and costs in check. Equinix reckons the answer is in neutral interconnection hubs that simplify last-mile access, orchestrate distributed infrastructure, and bring inference closer to users.

In sum – what to know:

Distributed AI – Training, inference, and agent workloads are forcing enterprises into complex multi-cloud setups and putting interconnect, backbone, and access networks under pressure.

Neutral fabric – Equinix proposes a last-mile fabric to connect branch offices to cloud hubs, and a hub framework to provide a neutral platform to manage AI across infrastructure vendors.

Edge inference – While training remains centralised, growing agentic and inference workloads are moving into the cities, increasing the importance of interconnection and governance.

We wrote about this yesterday, briefly, at the top of the newsletter – about how AI is eating the data center, and how networks are on the hook. Central cloud infrastructure is teetering under the weight of spiralling workloads, and being distributed east-west into regional backwaters in search of power, and north-south in the metro centers and enterprise premises in an urgent quest to actually put AI to work. Enterprises are, suddenly, stitching together training in one cloud, inference in another, and agents at the edge – all without breaking performance budgets. Which is why networks matter more than ever. So here is the 12-inch remix, as told to RCR by US data centre provider Equinix.

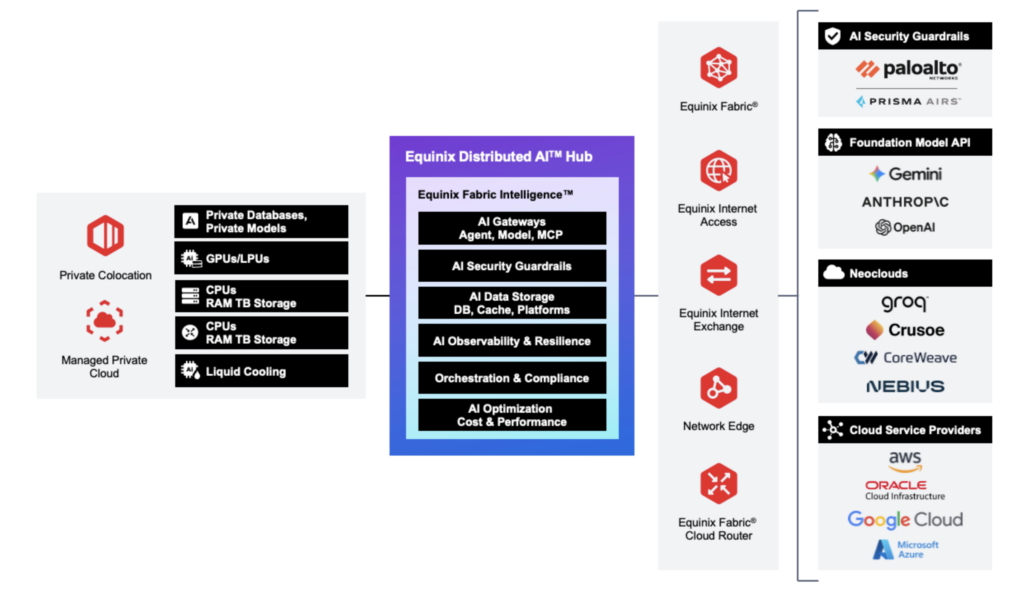

The conversation comes on the back of a couple of recent service announcements from the firm: a last-mile access service (Equinix Fabric), announced in January, and a related neutrality platform (Distributed AI Hub), announced yesterday (March 11). The point of both is to simplify the rapidly fragmenting cloud/edge compute market as supply deals multiply and workloads cascade – whether to secure scarce new GPU capacity for training or to serve tough new requirements for inferencing. The last-mile solution is designed to solve coverage and contract issues with telecom suppliers, mostly at enterprise branch offices, by aggregating and automating local connectivity services.

The other presents a “unified framework” to navigate the “maze of silos” being erected by the broad AI ecosystem as its technology has morphed, its application has multiplied, and its infrastructure has exploded and scattered. “AI isn’t centralized – but the right infrastructure can make it run as seamlessly as if it were.” That’s the line in its press release; the pitch from Equinix is that it has always been a neutral provider, hosting and shuffling enterprise data to and from big public hyperscale-clouds, as required – and now also, for 12 months at least, to a bunch of frontier model builders and a swarm of specialist neo-clouds.

The ‘fabric’ proposition makes the last telco-mile work more like a traditional hyper-scale cloud service, quicker to find and easier to deploy; the hub framework does the same, effectively, with the gross unwieldiness of the developing AI ecosystem so its fragmenting infrastructure scales as one – and so Equinix can reinforce its neutrality position. Arun Dev, vice president of digital interconnection at Equinix, is on the end of the line, and explains both. The process for an enterprise to manually sort last-mile connectivity with a telco – to an office in Omaha, Nebraska, for example – “could take months”, he says. Equinix serves 77 metro centers, but not Omaha – directly, anyway.

Network aggregator

The enterprise needs a local fix to get access into the distributed compute grid – likely into a facility along ‘data center alley’ in Ashburn, Virginia. Dev says: “You can set up cloud connectivity in minutes with basic skills. That’s what enterprises are used to. And then they have to deal with a network provider, and the quoting, ordering, provisioning takes weeks or months.

“They’ve got their cloud set up, and they’re waiting on these extra steps. It’s a lot of friction – Omaha to Ashburn, to a workload on AWS.” As such, Equinix is working with an aggregator platform from Resolute CS to automate the last-mile telco flow – with the likes of AT&T, Lumen, T-Mobile, Verizon, Zayo in the US.

“All the major providers are on the platform. Put in an address in Edinburgh and it shows the local carriers, and automates quoting and ordering. So we can meet customers where they are, anywhere – and simplify that [last-mile] experience for them.” The system has processed a “large number of quotes” so far, since January; “most” in APAC and in Europe, he says.

Equinix is building tighter first-party integrations, as well, with carriers in its “major markets” – so an enterprise might pick BT in the UK, say, to “go office-to-cloud, end-to-end – without the aggregator”. But even to AWS in Ashburn, the proposition is to converge at a local Equinix on-ramp facility, as a neutral interconnection hub.

Which is the rationale behind its other announcement, yesterday. Same logic, wider scope – that enterprises are spreading workloads across fragmented infrastructure: public and private, open and closed, international and national, central and local. Outside of the parochial access network, the challenge is about visibility and control across the whole piece – around the flow of data, amount of leakage, burden of compliance, application of guardrails. In the end, the overall impact on cost in such a fluid data architecture is hard to manage. “You know about shadow IT,” he says. “Well, shadow AI is real.” It is still about networks; just more about the orchestration between them.

Equinix calls its hub proposition a “neutral location that allows enterprises to discover, connect to, and consume AI infrastructure – including [from] model companies, GPU clouds, data platforms, network and security services”. These are delivered “all through private, low-latency connectivity” at its (280) data centers, it says. Dev does not say much about this “low-latency (inter)connectivity” – of the sort provided in programmable fiber backbone systems, as discussed in these pages recently in coverage of Verizon, Cisco, Nokia, Lumen, Ciena, plus all the stuff out of PTC – except to say the same providers on its aggregator platform are “in our facilities”.

Neutrality concept

Except, also, that the network is more critical than ever. “That is where our deep partnerships with service providers come in,” he says. But the hub framework is more broadly about how infrastructure is governed and orchestrated – using its data centers and network fabric as a control point for distributed AI workloads. Its first integration is with Palo Alto Networks (Prisma AI), covering governance and security across distributed systems; more will follow through the year. Hyperscaler marketplaces direct enterprises to hyperscale tools; the Equinix framework is vendor-neutral, giving customers freedom to compose their AI stack – which they are being forced to do anyway, argues Dev.

“The GPU shortage means they follow the supply, via a neo-cloud or another hyperscaler. So their workload is on cloud A and their GPU capabilities are in cloud B,” he says. As a point of note, the neutrality concept is based on the idea that enterprises meet the hyperscalers in shared facilities. Amazon Web Services (AWS), Microsoft Azure, Oracle Cloud Infrastructure (OCI), and Google Cloud (GCP) keep infrastructure in Equinix data centres in all its 77 ‘on-ramp’ metro stops – so a particular payload across a last-mile link in Omaha is properly directed in Ashburn, according to the work detail. More than 40 percent of global cloud on-ramps are in Equinix facilities, says Dev.

He goes on: “It drives this requirement to make distributed infrastructure work. Because your workload is split across locations. And if all of your data is in cloud A and all your compute is in cloud B, then you are moving data to and fro, and you get hit with egress [fees] on both sides. So enterprises have decided the best thing is to have their data in a neutral location, and use the compute in A and the workflow in B via the interconnection piece. I mean, that’s the whole evolution over the last 10 years: going from where everything moved to the public cloud, to putting the right workload in the right place, exacerbated by a shortage of GPUs – and, now, figuring out how to make that work.”
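The data-movement argument comes down to simple arithmetic, and a rough sketch makes the shape of it plain. What follows is illustrative only: it assumes clouds bill outbound (egress) traffic per gigabyte and do not charge for inbound traffic, and every rate, volume, and function name in it is hypothetical rather than anything quoted by Equinix or a cloud provider.

```python
def split_cloud_cost(gb_to_compute: float, gb_back_to_data: float,
                     egress_a_usd_per_gb: float, egress_b_usd_per_gb: float) -> float:
    """Data in cloud A, compute in cloud B: each direction pays the sender's egress rate."""
    return gb_to_compute * egress_a_usd_per_gb + gb_back_to_data * egress_b_usd_per_gb


def neutral_hub_cost(gb_results_back: float, private_egress_b_usd_per_gb: float) -> float:
    """Data held at a neutral site: only results leaving cloud B are charged.

    Assumes inbound transfer to the cloud is free and that traffic leaves
    cloud B over a private interconnect at its own (hypothetical) rate.
    """
    return gb_results_back * private_egress_b_usd_per_gb


# Hypothetical monthly volumes and rates, chosen only to show the shape of the sum.
split = split_cloud_cost(1000, 200, egress_a_usd_per_gb=0.09, egress_b_usd_per_gb=0.08)
neutral = neutral_hub_cost(200, private_egress_b_usd_per_gb=0.02)
print(f"split across clouds: ${split:.2f}, via neutral hub: ${neutral:.2f}")
```

The comparison leaves out what the neutral site itself costs (colocation, storage, port charges), so it shows only why the two-sided egress bill is the part enterprises want to avoid, not that the hub is cheaper overall.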

But it is not just about GPU shortages in east-west interconnect systems for training workloads; AI agents are migrating north-south to the edge for inference tasks, also forcing enterprises to look for neutral-host metro sites. Analyst firm IDC reckons 80 percent of enterprises will deploy distributed edge infrastructure to improve the latency and responsiveness of AI applications as soon as 2027; Omdia says agentic AI projects will see 175 percent annual growth (CAGR) through 2029. “Customers say, ‘Hey, great, I’ve done my training, but my inference is at the metro edge’,” says Dev.

Metropolitan edge

Which is where Equinix is, we know – in 77 “major metro markets,” says Dev. He goes on: “We have a standardized footprint across the world. Our co-location footprint in Singapore looks the same in Ashburn, Frankfurt, everywhere.” As well, enterprises are not required to install physical infrastructure to expand to new regions. “We have a virtualized stack. So you don’t always have to deploy physical gear,” he says. “If you want to expand into India, say, you can deploy virtual capabilities from us in Mumbai. You’ll get the same experience you get in Ashburn and London.” For inference workloads in particular, enterprises are increasingly deploying virtual infrastructure across metropolitan markets rather than shipping equipment into every location.

Data sovereignty, amped-up by dicey geopolitics, feeds into this helter-skelter AI management, as well. Equinix offers “jurisdiction-aware” steering so traffic on virtual services and colocation routes stays inside borders. “We can keep all traffic in Canada, say, so nothing goes to the US.” As a rule, enterprises keep their largest physical deployments in hubs such as Ashburn or London, while using virtual network functions – firewalls, routers, cloud connectivity from the likes of Palo Alto Networks, Cisco, Juniper Networks, Zscaler, variously – to extend into harder-to-reach markets. “A large US bank was up and running with a virtual setup in Mumbai in under a week,” he says.
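The idea behind “jurisdiction-aware” steering can be sketched as a filter over candidate routes. This is a minimal illustration, not a description of how Equinix implements it: the `Hop` type, the `paths_within` function, and the example routes are all hypothetical, and the sketch assumes each hop on a path can be tagged with the country it sits in.

```python
from typing import NamedTuple


class Hop(NamedTuple):
    site: str      # hypothetical site label
    country: str   # ISO 3166-1 alpha-2 code


def paths_within(paths: list[list[Hop]], allowed: set[str]) -> list[list[Hop]]:
    """Keep only the paths whose every hop sits in an allowed country."""
    return [path for path in paths if all(hop.country in allowed for hop in path)]


# Two made-up routes between the same endpoints; one detours through the US.
candidates = [
    [Hop("Toronto", "CA"), Hop("Montreal", "CA")],
    [Hop("Toronto", "CA"), Hop("Chicago", "US"), Hop("Montreal", "CA")],
]
in_canada = paths_within(candidates, allowed={"CA"})
print([[hop.site for hop in path] for path in in_canada])
```

A real system would also have to decide what to do when no compliant path exists; in this sketch the result is simply an empty list.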

The question of where AI agents will take up residence is unsettled. “All of the above,” says Dev, when presented with all the stops on the cloud-edge continuum. For now, it depends on the service provider, and where their resources are distributed. “Anthropic runs where Anthropic runs; an open LLaMA model might be deployed on-prem; Mistral AI will route traffic through its locations in France. Which is why the complexity of the traffic flow has exploded – versus the scenario 10 years ago.” But AI service providers will also follow the science, ultimately, governing the three-way equilibrium between application requirements, technological innovation, and infrastructure viability.

But the logic is plain: large-scale model training might remain highly centralized (“you can do all your training in West Texas, where there are farms and nothing else”), but inference workloads will move closer to the action – in factory systems, retail applications, financial services with stricter response times. Equinix’s metro footprint – including into new countries like Thailand, Malaysia, Indonesia – supports the shift, says Dev.

He rounds off: “It used to be simple: get to your Equinix co-location, get to AWS, get to Azure. That was it. Now I’ve got a LLaMA instance, Anthropic over there, my own model here, a factory somewhere else. How do you have visibility? How do you build connectivity? That agentic traffic is increasing significantly – north-south, some east-west. These are smaller requests, but there are lots of them. The complexity has multiplied significantly.”