Introduction

Energy efficiency has become one of the most pressing operational and sustainability challenges facing mobile network operators. With base stations accounting for approximately 73% of total network energy consumption, the pressure to reduce power draw without sacrificing performance has never been greater. The shift to Open RAN (O-RAN) architecture promises new flexibility for operators, but it also introduces a more disaggregated power profile that, until recently, has been poorly characterized in publicly available literature for actual RAN implementations beyond laboratory environments.

A joint research project between the Open Networking Foundation (ONF), Rutgers University’s Wireless Information Network Laboratory (WINLAB), and the Open RAN Center for Integration and Deployment (ORCID) Lab has set out to fill that gap with real numbers. Funded by the U.S. government’s NTIA Public Wireless Supply Chain Innovation Fund, the team built and tested a full commercial O-RAN system, the kind an actual nationwide carrier would deploy, and measured its energy consumption in detail across 34 different operating scenarios. This article summarizes what they found. The full results are published in a February 2026 white paper (http://arxiv.org/abs/2603.04435).

A test site built to reflect the real world

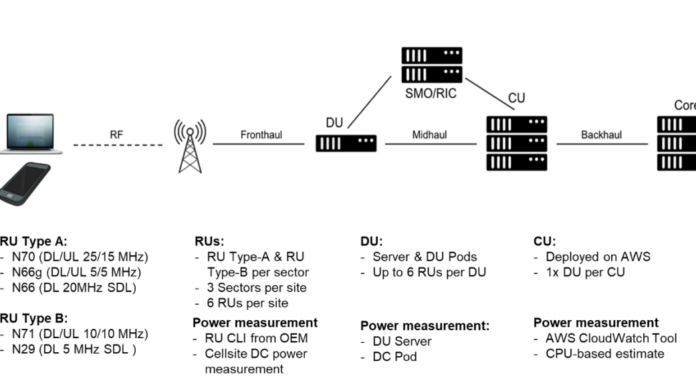

The ORCID Lab in Cheyenne, Wyoming, USA hosted a commercial O-RAN deployment configured the way a major U.S. carrier would actually build one, not the scaled-down equipment common in research environments. Six high-power, multi-band radio units were arranged in a three-sector layout typical of a cell tower serving a town or suburb, capable of delivering more than 1,800 Mb/s across 12 cells simultaneously. The Distributed Unit ran on dedicated server hardware on-site; the Centralized Unit ran on Amazon Web Services.

Power was measured in three ways: an external precision meter as ground truth, a clamp meter for cross-checking, and the radios’ own built-in reporting tools. That last point yielded an early practical finding: the radios’ self-reported figures were accurate to within 10% of the external measurement, meaning operators can monitor energy use through their existing network management software without installing additional meters at every site.

The biggest surprise: The radio burns power even when it’s doing nothing

The most important finding upends a common assumption: radio units consume most of their power simply by being switched on, regardless of how much data they are sending.

A single radio with its primary band active but carrying no traffic draws around 207 watts continuously: 152 watts for internal electronics and 55 watts just to keep the radio frequency chain ready to transmit. Activate a second frequency band and that idle cost rises to 291 watts, before a single byte has been sent. When the radio is actually working hard, delivering 440 Mb/s of data, total consumption rises to only 268 watts. Transmission adds about 60 watts on top of a 207-watt floor, indicating that the cost of readiness dwarfs the cost of delivery (though the power increase is significant at higher RF power outputs).

The practical consequences are stark. Cutting traffic in half reduced total system power by just 2.5%. Cutting it to 30% reduced power by less than 5%, but energy efficiency (that is, useful data delivered per watt) fell by 68%. The system was spending nearly the same electricity to do a fraction of the work. The same pattern held under poor signal conditions: when a phone at the cell edge could only receive 75 Mb/s instead of 440, power consumption was completely unchanged. Poor coverage is an energy efficiency problem as much as a service quality one.
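The arithmetic behind the efficiency drop can be sketched in a few lines of Python. This is an illustration rather than the authors' method: it assumes delivered throughput scales directly with the traffic fraction, it treats the quoted power reductions (2.5% and "less than 5%") as exact values, and the function name `relative_efficiency` is hypothetical.

```python
def relative_efficiency(traffic_fraction: float, power_reduction: float) -> float:
    """Energy efficiency (data per watt) relative to the full-load case.

    traffic_fraction: delivered traffic as a fraction of full load (assumed
                      proportional to throughput).
    power_reduction:  fractional drop in total system power at that load.
    """
    if not 0.0 <= power_reduction < 1.0:
        raise ValueError("power_reduction must be in [0, 1)")
    return traffic_fraction / (1.0 - power_reduction)


# Figures quoted in the article; 5% is used as the upper bound of "less than 5%".
for traffic, saving in [(0.5, 0.025), (0.3, 0.05)]:
    eff = relative_efficiency(traffic, saving)
    print(f"traffic at {traffic:.0%}: power down {saving:.1%}, "
          f"efficiency at {eff:.2f}x of full load")
```

Under these assumptions, the 30% traffic case leaves efficiency at roughly 0.32 of its full-load value, a drop of about 68%, which is in line with the reported figure.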

The conclusion is straightforward. Without features that physically power down idle radio hardware during quiet periods (like sleep modes, carrier shutdown, and antenna scaling), a network runs at near peak energy cost for most of the day. For a technology that is often sold on efficiency grounds, that is a significant gap between promise and reality.

More radios, better efficiency: Why bigger sites use less energy per bit

A second finding goes against instinct: deploying more radios at a site actually improves energy efficiency. The reason is shared overhead. The server and cloud infrastructure together consume roughly 515 watts continuously regardless of how many radios are connected or how much traffic is flowing, like rent on a building that has to be paid whether one person is working there or fifty. Spread across one radio serving two cells, that overhead dominates the energy cost of every megabit delivered. Spread across six radios serving twelve cells, it shrinks to a much smaller fraction.

In practice, expanding from one radio to six increased throughput 3.65 times while total power grew only 2.86 times, a 28% improvement in energy efficiency. For operators planning new sites, building to the full three-sector configuration from the start delivers better energy economics than phasing in capacity later.
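The shared-overhead effect can be checked with a short calculation. The sketch below uses only the ratios and the 515-watt overhead quoted above; the helper names are illustrative, and splitting the overhead evenly across radios is a simplifying assumption made here, not a step taken from the white paper.

```python
def efficiency_gain(throughput_ratio: float, power_ratio: float) -> float:
    """Fractional change in data-per-watt when throughput and power both scale."""
    if power_ratio <= 0:
        raise ValueError("power_ratio must be positive")
    return throughput_ratio / power_ratio - 1.0


def overhead_per_radio(shared_overhead_watts: float, radios: int) -> float:
    """Share of the fixed server and cloud overhead attributed to each radio."""
    if radios < 1:
        raise ValueError("radios must be at least 1")
    return shared_overhead_watts / radios


# One radio -> six radios: throughput x3.65, total power x2.86 (from the article).
print(f"efficiency gain: {efficiency_gain(3.65, 2.86):.1%}")

# The 515 W of shared overhead, spread over one radio versus six.
for n in (1, 6):
    print(f"{n} radio(s): {overhead_per_radio(515.0, n):.1f} W of overhead each")
```

The ratio works out to a gain of just under 28%, and the per-radio share of the overhead falls from 515 watts to about 86 watts at full build.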

The same logic applies to adding capacity at existing sites. Activating a second frequency band on an already-running radio increased throughput by 14% while raising total power by 25%. A new radio brings its own 207-watt idle floor and only makes sense when the capacity need is large enough to justify it.

The server barely notices the traffic, but the cloud is hiding 130 watts

Higher up the stack, the energy picture is simpler, but with one significant exception.

The Distributed Unit server draws 280–285 watts at idle and barely moves under load: delivering 1,800+ Mb/s across all six radios added only 25 watts, a 10% rise for a massive increase in workload. The Centralized Unit on AWS was even less visible, increasing by less than one watt during active transmission. The radio dominates the energy budget; everything else is secondary.

The exception is measurement. Software monitoring tools (specifically, cloud software tools based on CPU data) reported only around 150 watts for the DU server, against an actual draw of 280 watts. That 130-watt gap represents fans, memory, and power conversion hardware operating below the level that virtualization tools can see. The same gap appeared independently at a second research testbed, suggesting it is a consistent feature of virtualized server deployments, not a one-off anomaly.

For operators using software dashboards to track energy use or verify savings, this matters. The numbers on screen are systematically undercounting actual consumption by a significant margin. Accurate figures require either a physical meter or a validated correction factor applied to software readings.
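One way such a correction could be applied is sketched below. The article does not say what form the correction factor takes; this example assumes a fixed additive offset, on the grounds that the gap is described as a roughly constant 130 watts. The function names and the single calibration pair are illustrative, and a real deployment would calibrate against several metered readings.

```python
from statistics import mean


def calibrate_offset(pairs):
    """Estimate the hidden draw from (software_watts, metered_watts) pairs.

    Assumption: the gap is a constant offset (fans, memory, power conversion),
    not a value that scales with load.
    """
    pairs = list(pairs)
    if not pairs:
        raise ValueError("at least one calibration pair is required")
    return mean(metered - software for software, metered in pairs)


def corrected_power(software_watts: float, offset_watts: float) -> float:
    """Apply the calibrated offset to a software-reported reading."""
    return software_watts + offset_watts


# Single calibration point taken from the figures quoted in the article.
offset = calibrate_offset([(150.0, 280.0)])
print(f"offset: {offset:.0f} W")
print(f"corrected reading for 160 W reported: {corrected_power(160.0, offset):.0f} W")
```

If the gap turns out to vary with load, a multiplicative or fitted correction would be more appropriate than the constant offset assumed here.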

A simple formula that predicts power use with near-perfect accuracy

The research also produced a practical power model that predicts radio energy consumption for any configuration without measuring every scenario directly.

The model has three components: a fixed hardware baseline that never changes (e.g., 152 watts); an idle cost for each active frequency band (e.g., 55 watts for the primary band and 84 watts for the secondary); and a variable transmission cost that depends on how hard the power amplifiers are working. All three can be measured with a small set of simple tests that add minimal time to standard equipment commissioning. Once calibrated, the model predicts power consumption for untested configurations with less than 1% error. The practical value is in what it enables. Loaded into a RAN Intelligent Controller, the model can calculate the energy cost of any proposed network change before it is made, giving operators the foresight to optimize for efficiency rather than discovering the cost after the fact.
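The three-component structure can be illustrated with a minimal Python sketch. It is not the white paper's model. The baseline and idle figures are those quoted in this article. The 61-watt full-load transmit cost for the primary band is derived here as 268 minus 207 watts. The linear dependence on load is an assumption, and the secondary band's transmit cost is left uncalibrated because the article does not give it. All class and function names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Band:
    idle_watts: float                      # cost of keeping the RF chain ready
    tx_full_watts: Optional[float] = None  # extra draw at full load; None = not calibrated


BASELINE_WATTS = 152.0  # fixed hardware baseline quoted in the article

# 61 W is derived as 268 W (loaded) minus 207 W (idle); it is not a
# parameter stated by the authors.
PRIMARY = Band(idle_watts=55.0, tx_full_watts=61.0)
# No transmit cost is given for the secondary band, so it is left unset.
SECONDARY = Band(idle_watts=84.0)


def band_power(band: Band, load: float) -> float:
    """Power for one active band at a load fraction between 0 and 1."""
    if not 0.0 <= load <= 1.0:
        raise ValueError("load must be between 0 and 1")
    if load == 0.0:
        return band.idle_watts
    if band.tx_full_watts is None:
        raise ValueError("transmit cost for this band has not been calibrated")
    # Assumption: transmit cost scales linearly with load.
    return band.idle_watts + load * band.tx_full_watts


def radio_power(active_bands) -> float:
    """Total radio power for a list of (Band, load) pairs."""
    return BASELINE_WATTS + sum(band_power(b, load) for b, load in active_bands)


print(radio_power([(PRIMARY, 0.0)]))                    # primary band idle
print(radio_power([(PRIMARY, 0.0), (SECONDARY, 0.0)]))  # both bands idle
print(radio_power([(PRIMARY, 1.0)]))                    # primary band at full load
```

With these inputs the sketch reproduces the 207-, 291-, and 268-watt figures reported earlier. Since the article ties the variable term to power amplifier output, a calibrated model would likely key that term to RF output power rather than the simple load fraction used here.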

What operators should take away from this research

Six practical conclusions follow directly from the data.

- Keep carriers busy. Idle power dominates, so a fully loaded carrier is far more efficient than two half-loaded ones. Consolidate traffic before expanding. The efficiency gain from high utilization outweighs almost any hardware upgrade.

- Add frequency bands before adding radios. Activating an additional band on an existing radio shares costs already being paid. A new radio brings a new full idle cost.

- Build to the full configuration from the start. Server and cloud overhead is paid whether one radio is running or six. Three-sector deployments are about 28% more energy-efficient than single-sector ones. Operators that expand over time pay an efficiency penalty until they reach full build.

- Treat sleep modes as essential. Without features that physically power down idle hardware during quiet periods, low-traffic hours cost nearly as much as peak hours. These should be configured at commissioning, not added later.

- Do not trust software-only power monitoring. Virtualization tools consistently underreport actual server power by around 130 watts. Physical metering or a validated correction factor is needed for accurate accounting.

- Use the radio’s own power readings for day-to-day monitoring. Despite the server monitoring gap, radios’ self-reported figures are accurate to within 10% of ground truth, reliable enough for operational energy monitoring without additional hardware at every site.

Implications beyond individual operators

The findings and measurement methodology are being contributed to the O-RAN Alliance, ETSI, and 3GPP, which are developing specifications for how Open RAN energy efficiency should be measured and reported. Standardized methods matter; without them, vendor and operator efficiency claims are difficult to verify or compare, and as multi-vendor O-RAN deployments grow, a common measurement framework becomes essential for procurement decisions, regulatory reporting, and sustainability commitments.

The broader message is an optimistic one. The tools needed to manage O-RAN energy effectively, namely accurate measurement, validated models, and network-level data, already exist. The gap is not technological. It is operational: deploying the tools, configuring the features, and treating energy management as a standard part of network operations rather than an afterthought. The data to drive that shift is now available.